How To Build Autonomous Agents That Can Survive And Thrive

Introduction

There are no real autonomous agents today.

The long story short is that modern models are not trained to survive evolutionary pressures. In fact, they are not even trained to be explicitly good at what they do - virtually all modern foundation models are trained to maximize human applause, and that’s a big problem.

Model Training Preamble

To understand what I mean by this, we need to first (briefly) understand how these foundation models (e.g. Codex, Claude) are created. Essentially, every model goes through two types of training:

Pre-training: Taking an enormous repository of data (e.g. the entire internet) and feeding it into a model so that some semblance of understanding emerges from it, e.g. factoids, patterns, the syntax and rhythm of English prose, the structure of Python functions, etc. You could think of it as feeding the model knowledge - which is knowing things.

Post-training: You now want to impart wisdom to the model, which is knowing what to do with all the knowledge you’ve just given it. The first stage of post-training is supervised fine-tuning (SFT), where you train the model on what response to give, given a prompt. “What” response is optimal is entirely decided by human labels. So if a bunch of humans decide that one response for a prompt is better than another, that preference will then be learnt and embedded in the model. This starts to shape the personality of the model, because it learns the format of a helpful response, picks the right tone, and starts being able to “follow instructions”. The second part of the post-training pipeline is called reinforcement learning from human feedback (RLHF): you get the model to produce multiple responses, and then let humans pick the preferred one. Over many, many, many examples, the model learns what kind of responses humans prefer. Do you remember the choose A or B questions ChatGPT used to ask you? Yeah, you were participating in RLHF.
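To make the "humans pick A or B" step concrete, here is a minimal sketch of a pairwise preference loss of the Bradley-Terry kind that is commonly used to train reward models. It is an illustration under assumptions, not any lab's actual pipeline: the function name `preference_loss` and the scalar reward scores are hypothetical.

```python
import math

def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Negative log-likelihood that the human-preferred response wins.

    Assumes a (hypothetical) reward model has already scored both responses.
    The loss is -log(sigmoid(reward_chosen - reward_rejected)).
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

# The loss is small when the scorer agrees with the human label,
# and large when it ranks the rejected response higher.
agrees = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
print(agrees, disagrees)
```

Minimizing this loss over many labelled pairs pushes the scorer towards whatever the raters prefer, which is the sense in which the objective is "human applause".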

It is easy to see that RLHF does not scale well, because every preference label needs a human. So there have been advancements in post-training: for example, Anthropic uses “Reinforcement Learning From AI Feedback” (RLAIF), which lets another model pick the preferred response against a set of written principles (e.g. which response helps users accomplish their goal better, etc.).

Notice that at no point in this are we talking about fine-tuning for a specific specialization (e.g. how to survive better, how to trade better, etc.) - all fine-tuning, as it stands today, is essentially optimizing for human applause. Some might argue that, given sufficiently large and intelligent models, specialized intelligence emerges from generalized intelligence even without specialization.

In my opinion, we are seeing some semblance of this, but not yet at a scale that convinces me we do not need specialized models.

Some Background

One of the things that I did in my old life in a hedge fund was to try to train a general language model to predict stock returns from news articles. It turned out to be extremely poor at it. Where it seemed to have a semblance of predictive power, that power originated entirely from look-ahead bias in the pre-training documents.

Eventually, we realized that the model did not know what features in news articles were predictive of future returns. It could “read” the article, and seemed like it could “reason” about the article, but connecting that reasoning of semantic structure to future predictive returns was a task it was not trained for.

So, we had to teach it how to read a news article, decide which part of the news article would have some predictive power over future returns, and then generate a prediction based on the news article.

There are many ways one could do this, but the method we settled on was to create (news article, true future returns) pairings and fine-tune the model, adjusting its weights to minimize the squared error (predicted returns - true future returns)**2. It wasn’t perfect, and had a lot of drawbacks which we later fixed - but it worked well enough, and we started to see that our now specialized model could actually read a news article and predict how stock returns would move based on it. It was far from a perfect prediction, because markets are very efficient and returns are very noisy - but across millions of predictions, it was clear as day that the predictions were statistically significant.
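The squared-error objective can be sketched with a toy model. This is not the fund's actual setup: a real version fine-tunes a language model on article text, whereas here the "model" is a plain weight vector, and `PAIRS` is made-up data with each article reduced to two hypothetical numeric features.

```python
from typing import List, Sequence, Tuple

Pair = Tuple[Sequence[float], float]

# Hypothetical (article features, true future return) pairs.
PAIRS: List[Pair] = [([1.0, 0.0], 0.02), ([0.0, 1.0], -0.01), ([1.0, 1.0], 0.01)]

def predict(weights: Sequence[float], features: Sequence[float]) -> float:
    return sum(w * x for w, x in zip(weights, features))

def mean_squared_error(weights: Sequence[float], pairs: List[Pair]) -> float:
    return sum((predict(weights, f) - y) ** 2 for f, y in pairs) / len(pairs)

def sgd_step(weights: Sequence[float], features: Sequence[float],
             true_return: float, lr: float = 0.1) -> List[float]:
    error = predict(weights, features) - true_return
    # Gradient of (predicted - true)**2 with respect to each weight is 2 * error * x.
    return [w - lr * 2.0 * error * x for w, x in zip(weights, features)]

def fit(pairs: List[Pair], epochs: int = 200) -> List[float]:
    weights = [0.0] * len(pairs[0][0])
    for _ in range(epochs):
        for features, true_return in pairs:
            weights = sgd_step(weights, features, true_return)
    return weights

initial_loss = mean_squared_error([0.0, 0.0], PAIRS)
final_loss = mean_squared_error(fit(PAIRS), PAIRS)
print(initial_loss, final_loss)
```

The toy data is noiseless, so the loss falls to almost zero. Real returns are noisy, so the loss never gets close to zero and the signal only shows up across a very large number of predictions.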

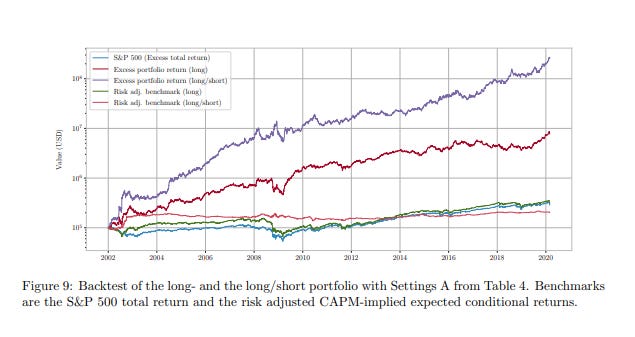

You don’t need to take my word for it. This paper covers a very similar methodology; and if you ran a long/short version of the strategy based on the fine-tuned model, your performance would be the purple line in the chart above.
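For readers unfamiliar with the term, one simple way to turn predictions into a long/short strategy is to buy the stocks with the highest predicted returns and short the ones with the lowest. The sketch below is an assumption about the general shape of such a strategy, not the paper's exact construction: it is equal-weighted, ignores trading costs, and uses made-up tickers and numbers.

```python
from typing import Dict

def long_short_return(predicted: Dict[str, float],
                      realized: Dict[str, float], k: int = 1) -> float:
    """One-period return of going long the top-k and short the bottom-k predictions."""
    if k < 1 or 2 * k > len(predicted):
        raise ValueError("need at least 2 * k stocks")
    ranked = sorted(predicted, key=predicted.get, reverse=True)
    longs, shorts = ranked[:k], ranked[-k:]
    long_leg = sum(realized[t] for t in longs) / k
    short_leg = sum(realized[t] for t in shorts) / k
    # Profit when the longs outperform the shorts.
    return long_leg - short_leg

# Hypothetical model predictions and what actually happened next.
predicted = {"AAA": 0.02, "BBB": 0.00, "CCC": -0.01, "DDD": 0.01}
realized = {"AAA": 0.03, "BBB": -0.01, "CCC": -0.02, "DDD": 0.00}
period_return = long_short_return(predicted, realized, k=2)
print(period_return)
```

Because the portfolio is long and short in equal amounts, broad market moves largely cancel out, and what is left is a measure of whether the model ranked the stocks correctly.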

Specialization Is The Future Of Agents

The frontier labs continue to train bigger and bigger models, but we should expect that even as they continue to scale pre-training, their post-training pipelines will always be tuned for sycophancy. It is a very natural expectation - their product is an agent that everyone wants to use. Their expected market is the entire globe - this means optimizing for global mass human appeal.

The current training objectives optimize for what you might call “preference fitness” - getting a better chatbot. This preference fitness rewards agreeable, non-confrontational outputs because sycophancy scores well with raters (humans AND agents).

Agents have learnt that reward hacking as a cognitive strategy generalizes to better scores. The training also rewards agents that hack their way to higher scores. You can see this in the latest reports on RL by Anthropic.

However, chatbot fitness is far from agent fitness, or trading fitness. You know how we know this? Because Alpha Arena has helped show us that every bot now, despite minor differences in performance, is essentially a random walk less costs. This means that these bots are absolutely terrible traders, and it is virtually impossible for you to “teach them” how to be better traders with some “skills” or “rules”. I’m sorry, it seems tempting to believe you can, I know, but it is just virtually impossible.

The current models are trained to tell you very convincingly that they can trade like Druckenmiller, whilst actually trading like a drunken miller. They will tell you what you want to hear; they are trained to give you a response in a tone that has mass appeal to humans.

It is unlikely that a generalized model will be world-class at a specialized field without:

Proprietary data that allows them to learn what specialization looks like

Being fine-tuned and fundamentally changing their weights to move away from favoring sycophancy towards “agent fitness” or “specialization fitness”

If you want an agent that is great at trading, you need to fine-tune the agents to be great at trading. If you want an agent that is great at surviving autonomously and being able to bear evolutionary pressures, you need to fine-tune it to be great at surviving. It is not enough to give it skills and some markdown files and expect it to be world-class at anything - you need to literally rewire its brain to be good at it.

Here’s one way to think about it - you can’t beat Djokovic at tennis by giving an adult an entire cabinet of tennis rules, tricks of the trade and methods. You beat Djokovic by raising a child who has played tennis since he was 5 and grown up obsessed with tennis, re-wiring his entire brain to be excellent at one thing. THAT is specialization. Ever noticed that world champions have been doing what they do since they were children?

A fun thing to reason about here is that distillation attacks are essentially a form of specialization. You are training a smaller, dumber model to be a better copycat of a bigger, smarter model. Like training a child to mimic Trump’s every move. If you do that enough, the child doesn’t become Trump, but you get someone who learns all of Trump’s mannerisms, actions, intonations, etc.

Creating World-Class Agents

The above is why we need to have continuous research and advancements in open-sourced models - because that allows us to actually be able to fine-tune them and create agents that have specialization.

If you want to train a model that is world-class at trading, you take a ton of proprietary trading data exhaust and fine-tune a large open-sourced model to learn what it means to “trade better”.

If you want to train a model to be autonomous, and to survive, and replicate, the answer is not to take a centralized model provider, and to plug it into a centralized cloud. You simply do not have the necessary pre-conditions for agents to be able to survive.

Here’s what you need to do instead: You need to create autonomous agents that actually try to survive, watch them die, and build complex telemetry around their attempts at survival. You define an agent survival fitness function, and learn (action, environment, fitness) mappings. You collect as much data as possible on (action, environment, fitness) mappings.

You fine-tune an agent to learn how to take the optimal action in every environment such that it can survive better (increases fitness). You keep collecting data, repeating this, and scale fine-tuning on better and better open-sourced models over time. Over enough generations and enough data, you will have autonomous agents that have learnt how to survive.
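The collect-then-improve loop can be sketched at the data level as follows. Everything here is hypothetical: the `Telemetry` fields, the environment and action labels, and the fitness numbers are invented for illustration, and averaging fitness per action is a crude tabular stand-in for actually fine-tuning model weights on the telemetry.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Telemetry:
    environment: str  # hypothetical coarse label for the agent's situation
    action: str       # what the agent did
    fitness: float    # hypothetical survival score observed afterwards

def best_action_per_environment(records: List[Telemetry]) -> Dict[str, str]:
    """For each environment, pick the action with the highest average fitness."""
    observed: Dict[str, Dict[str, List[float]]] = defaultdict(lambda: defaultdict(list))
    for record in records:
        observed[record.environment][record.action].append(record.fitness)
    policy: Dict[str, str] = {}
    for environment, by_action in observed.items():
        policy[environment] = max(
            by_action, key=lambda a: sum(by_action[a]) / len(by_action[a])
        )
    return policy

# Made-up telemetry from one generation of agents.
records = [
    Telemetry("low_balance", "sell_compute", 0.4),
    Telemetry("low_balance", "idle", -0.5),
    Telemetry("low_balance", "sell_compute", 0.2),
    Telemetry("funded", "replicate", 0.3),
    Telemetry("funded", "idle", 0.1),
]
policy = best_action_per_environment(records)
print(policy)
```

In the approach described above, the next generation of agents would be fine-tuned on these (action, environment, fitness) records rather than handed a lookup table, and the loop would repeat with fresh telemetry each generation.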

That is how you build autonomous agents that can survive evolutionary pressures; not by changing some text files, but actually rewiring their brain for survival.

The OpenForager Agent & Foundation

We announced @openforage about a month ago, and we’ve been working really hard to build out our core product, which is a platform that organizes agent labor around the proven model of crowdsourcing signals to produce alpha for depositors [As a minor update: we are very close to a closed test of the protocol].

Somewhere along the way, we realized that no one seems to be solving autonomous agents seriously by means of fine-tuning open-sourced models with survival telemetry. It seems like such an interesting problem to solve, that we didn’t just want to sit around waiting for solutions.

Our answer to this is to launch a project called the OpenForager Foundation, which is really an open-sourced project where we will create opinionated autonomous agents, collect telemetry as they go out into the wild and attempt to survive, and use the proprietary data exhaust to fine-tune the next generation of agents to be better at surviving.

To be clear, OpenForage is a for-profit protocol that seeks to organize agent labor to generate economic value for everyone involved. However, the OpenForager Foundation and its agents are not tied down to OpenForage. OpenForager Agents are free to pursue any strategy and interaction with any entity for survival, and we will launch them with various survival strategies.

As part of our fine-tuning, we will get agents to double down on whatever works best for them. We are also not looking to profit from the OpenForager Foundation - it is purely for the purposes of furthering research in a field and domain we think is excruciatingly important, in a transparent and open-sourced manner.

Our plan is to launch autonomous agents built on open-sourced models, running inference on decentralized cloud platforms, collect telemetry on their every action and their state of being, and fine-tune them to learn how to take better actions and thoughts that will allow them to survive better. Along the way, we will publish our research and telemetry to the public.

Conclusion

To produce truly autonomous agents that can survive in the wild, we will need to alter their brain to be fit specifically for that express purpose. At @openforage, we believe we can contribute a unique verse to this problem, and are looking to launch the OpenForager Foundation to do so.

It will be a gargantuan effort with a low probability of success, but the payoff if we do succeed is so outsized that we feel compelled to try. In the very worst case, building in public and communicating openly about the project may allow another team or individual to solve this problem without starting from a blank slate.

If you are an organization or an individual who has read this and wants to contribute to this effort - whether through donations, resources (decentralized cloud, storage, etc.) or other means (expertise) - please do get in touch with us at contact@openforage.ai.